How I got a perfect Lighthouse score with Astro and Claude Code

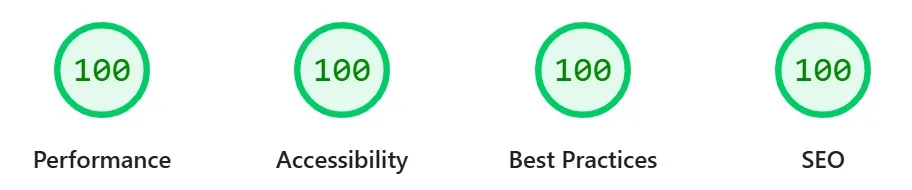

I replatformed my site from Webflow to Astro using Claude Code. The result: 100 across Performance, Accessibility, Best Practices, and SEO.

I replatformed aiagentstrategy.com from Webflow to Astro this week. The entire thing was built with Claude Code.

After deploying, I ran a Lighthouse audit. The result: 100 in all four categories (Performance, Accessibility, Best Practices, and SEO).

I didn’t set out to chase a perfect score. It just happened to land there because of how the site was built.

What Claude Code actually did

Claude Code handled the full build: the Astro project setup, Tailwind CSS integration, component structure, content pipeline, and deployment config for Vercel. I directed the work and made the decisions on structure and design; Claude Code executed.

A few things that contributed to the score:

Static-first architecture. Astro generates static HTML by default and ships no client-side JavaScript unless a component explicitly opts in. That’s a huge performance win out of the box.

Semantic HTML. Claude Code writes clean, semantic markup: proper heading hierarchy, landmark elements, and alt attributes. It’s the kind of stuff that scores well on accessibility and SEO without extra effort.
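“Proper heading hierarchy” is easy to check mechanically. Below is a minimal sketch of the rule, roughly what Lighthouse’s `heading-order` accessibility audit looks for: a heading may jump back up any number of levels but should only go one level deeper at a time. The function name and the idea of feeding it an array of levels (1 for `<h1>` through 6 for `<h6>`) are assumptions for illustration; a real check would pull the levels out of the rendered HTML.

```typescript
// Hypothetical helper: a heading that goes more than one level deeper than
// the heading before it (e.g. an <h2> followed directly by an <h4>).
interface HeadingIssue {
  index: number; // position of the offending heading in document order
  from: number;  // level of the previous heading
  to: number;    // level of the heading that skipped ahead
}

function findSkippedHeadingLevels(levels: number[]): HeadingIssue[] {
  const issues: HeadingIssue[] = [];
  for (let i = 1; i < levels.length; i++) {
    const prev = levels[i - 1];
    const curr = levels[i];
    // Going back up (h4 -> h2) is fine; going down more than one level is not.
    if (curr > prev + 1) {
      issues.push({ index: i, from: prev, to: curr });
    }
  }
  return issues;
}

// A valid outline, then one that jumps from <h2> straight to <h4>.
console.log(findSkippedHeadingLevels([1, 2, 3, 2, 3]));
console.log(findSkippedHeadingLevels([1, 2, 4]));
```

The first call reports nothing; the second flags the `<h4>` at index 2.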

Accessible color choices. The initial build had one contrast issue: muted text that didn’t meet the WCAG AA contrast requirement (4.5:1 for normal-size text). I ran the Lighthouse report, told Claude Code about the failing elements, and it fixed the color values in one edit. That took the Accessibility score from near-perfect to 100.
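The contrast check itself is a small, well-defined formula, so it is worth seeing. A minimal sketch of the WCAG 2.x relative-luminance and contrast-ratio calculation that contrast checkers such as Lighthouse’s `color-contrast` audit are built around. The function names are hypothetical, it only accepts `#rrggbb` colors, and the grays below are illustrative, not the site’s actual palette.

```typescript
// Parse a "#rrggbb" string into 0-255 sRGB channel values.
function parseHex(hex: string): number[] {
  const match = /^#?([0-9a-f]{6})$/i.exec(hex.trim());
  if (!match) throw new Error(`Expected a #rrggbb color, got "${hex}"`);
  const n = parseInt(match[1], 16);
  return [(n >> 16) & 0xff, (n >> 8) & 0xff, n & 0xff];
}

// WCAG 2.x relative luminance: linearize each channel, then weight it by
// how bright that primary appears to the eye.
function relativeLuminance(hex: string): number {
  const [r, g, b] = parseHex(hex).map((v) => {
    const c = v / 255;
    return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
  });
  return 0.2126 * r + 0.7152 * g + 0.0722 * b;
}

// Contrast ratio runs from 1 (identical colors) to 21 (black on white).
function contrastRatio(foreground: string, background: string): number {
  const a = relativeLuminance(foreground);
  const b = relativeLuminance(background);
  return (Math.max(a, b) + 0.05) / (Math.min(a, b) + 0.05);
}

// AA requires 4.5:1 for normal text and 3:1 for large text
// (roughly 18pt regular or 14pt bold and up).
function meetsWcagAA(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}

console.log(contrastRatio("#777777", "#ffffff").toFixed(2), meetsWcagAA("#777777", "#ffffff"));
console.log(contrastRatio("#767676", "#ffffff").toFixed(2), meetsWcagAA("#767676", "#ffffff"));
```

Gray `#777777` on white lands just under 4.5:1 and fails AA for body text; one step darker, `#767676`, clears it. That is why a “muted” color fix is often a one-value edit.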

Redirects for the old site. Since this was a replatform from Webflow, there were indexed URLs that needed redirects. Claude Code set up the redirect rules in vercel.json to cover every old URL pattern. Clean redirects help preserve search rankings and link equity during a migration.
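Vercel reads redirects from a `redirects` array in vercel.json, where each entry has a `source`, a `destination`, and a `permanent` flag, and path patterns such as `:slug` carry over from source to destination. A minimal sketch that generates that array from an old-to-new path map; the Webflow paths, the new Astro paths, and the `buildRedirects` name are all hypothetical, not the site’s real rules.

```typescript
// One entry in vercel.json's "redirects" array.
interface VercelRedirect {
  source: string;
  destination: string;
  permanent: boolean; // true -> 308 Permanent Redirect, false -> 307
}

// Hypothetical old Webflow paths mapped to new Astro paths.
// ":slug" is a named segment that Vercel substitutes into the destination.
const oldToNew: Record<string, string> = {
  "/post/:slug": "/blog/:slug",
  "/about-us": "/about",
  "/contact": "/contact", // path unchanged, so no redirect is needed
};

function buildRedirects(map: Record<string, string>): VercelRedirect[] {
  return Object.keys(map)
    // A rule whose source equals its destination would redirect to itself.
    .filter((source) => map[source] !== source)
    .map((source) => ({ source, destination: map[source], permanent: true }));
}

// Print a vercel.json body containing the generated rules.
console.log(JSON.stringify({ redirects: buildRedirects(oldToNew) }, null, 2));
```

Keeping the mapping as data makes it easy to diff against a crawl or sitemap export of the old site, so no indexed URL gets missed.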

What I learned

The thing that stood out to me wasn’t the score itself. It was that I didn’t have to think about most of this.

I didn’t manually configure performance optimizations. I didn’t audit my HTML structure for accessibility. I didn’t research Lighthouse scoring criteria. Claude Code chose solid defaults, and the few issues that came up were quick to fix because I could just describe the problem and get a targeted solution.

This is what working with AI agents looks like in practice. Not perfect the first time, but fast to iterate. You build, you test, you describe what’s wrong, and the agent fixes it. The feedback loop is tight.
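The “describe what’s wrong” step can be as simple as pasting a list of failing audits. A sketch, assuming a report saved with the Lighthouse CLI (for example `lighthouse https://example.com --output=json --output-path=report.json`) and already parsed with `JSON.parse`. The interfaces cover only the few report fields the function reads, `describeFailures` is a hypothetical name, and the sample report is trimmed and made up, including its weights.

```typescript
// Minimal subset of the Lighthouse JSON report that this sketch reads.
interface AuditRef { id: string; weight: number; }
interface Category { title: string; score: number | null; auditRefs: AuditRef[]; }
interface Audit { title: string; score: number | null; scoreDisplayMode: string; }
interface LighthouseReport {
  categories: Record<string, Category>;
  audits: Record<string, Audit>;
}

// List each category below 100 and the weighted audits pulling it down,
// as plain text that can be pasted into a prompt.
function describeFailures(report: LighthouseReport): string[] {
  const lines: string[] = [];
  Object.keys(report.categories).forEach((key) => {
    const category = report.categories[key];
    // Scores are 0-1 in the JSON; the Lighthouse UI shows them multiplied by 100.
    const score = category.score === null ? null : Math.round(category.score * 100);
    if (score === 100) return;
    lines.push(`${category.title}: ${score === null ? "no score" : score}`);
    category.auditRefs.forEach((ref) => {
      const audit = report.audits[ref.id];
      // Skip missing, unweighted, and unscored audits (informative, manual, n/a).
      if (!audit || ref.weight === 0 || audit.score === null) return;
      if (audit.scoreDisplayMode !== "binary" && audit.scoreDisplayMode !== "numeric") return;
      if (audit.score < 1) lines.push(`  - ${ref.id}: ${audit.title}`);
    });
  });
  return lines;
}

// Trimmed, made-up report for illustration; real reports have many more fields.
const sample: LighthouseReport = {
  categories: {
    accessibility: {
      title: "Accessibility",
      score: 0.96,
      auditRefs: [
        { id: "color-contrast", weight: 7 },
        { id: "image-alt", weight: 10 },
      ],
    },
    seo: { title: "SEO", score: 1, auditRefs: [] },
  },
  audits: {
    "color-contrast": { title: "Insufficient text contrast (sample)", score: 0, scoreDisplayMode: "binary" },
    "image-alt": { title: "Images have alt text (sample)", score: 1, scoreDisplayMode: "binary" },
  },
};

console.log(describeFailures(sample).join("\n"));
```

For the sample, only the Accessibility category and its failing `color-contrast` audit are printed; a perfect report prints nothing, which is a convenient signal that the loop is done.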

If you’re running a content site on a platform that feels heavy or slow, a replatform to Astro with Claude Code doing the build work is surprisingly fast. I went from Webflow to a fully deployed site with perfect Lighthouse scores in hours, not weeks.

The score is nice. The workflow is the real win. Build, test, describe what’s wrong, ship the fix. That cycle keeps getting shorter.